The MCP Spec Is Converging on MCPProxy's Architecture

Algis Dumbris • 2026/03/27

When the Spec Catches Up to the Implementation

There is a particular kind of validation that happens when you build something based on first principles and then watch the standards community arrive at the same conclusions independently. It does not happen because you predicted the future. It happens because the constraints are real, the failure modes are observable, and anyone who takes the problem seriously will eventually converge on the same set of solutions.

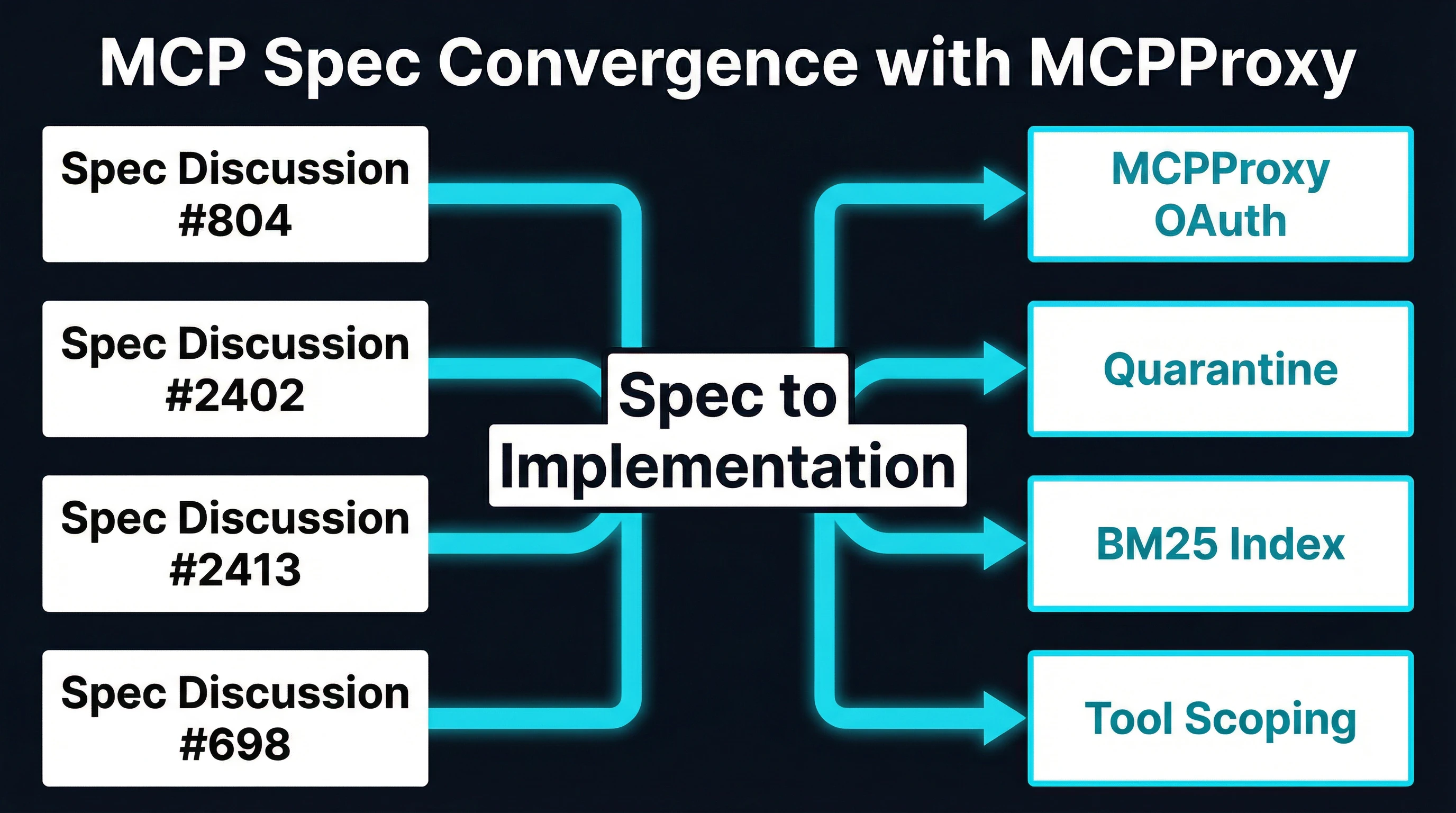

Over the past several months, four discussions in the MCP specification repository have been gaining traction. Each one describes a pattern or capability that the spec currently lacks — and each one describes something MCPProxy has been shipping in production since its first release. These are not obscure edge cases. They are foundational concerns about authorization, integrity, discovery, and scoping that every MCP deployment eventually encounters.

The discussions are #804 (Gateway-Based Authorization), #2402 (Tool Integrity), #2413 (Service Registry), and #698 (User-Scoped Tool Discovery). Together, they form a coherent picture of where the MCP specification is heading. And together, they describe an architecture that already exists.

Discussion #804: Gateway-Based Authorization

The MCP protocol, as originally specified, treats authorization as a concern for individual servers. Each server handles its own authentication, its own token management, its own permission model. This works fine when an agent connects to a single server. It falls apart when agents connect to dozens.

Discussion #804 proposes a gateway layer that centralizes authorization. Instead of each server implementing OAuth flows, token validation, and permission checks independently, a gateway sits between the client and all upstream servers, handling authorization uniformly. The gateway validates the agent’s identity once, maps that identity to a set of permissions, and enforces those permissions across every tool call regardless of which upstream server handles it.

The arguments in the discussion are compelling and practical. Enterprise teams cannot audit twenty different authorization implementations across twenty MCP servers. Security teams cannot enforce consistent policies when each server defines its own permission model. Compliance requirements demand a single enforcement point that can be monitored, logged, and audited. The discussion participants are converging on the idea that MCP needs a first-class concept of a gateway — an intermediary that owns the authorization boundary.

What MCPProxy Ships Today

MCPProxy has operated as exactly this kind of gateway since its initial release. When you run mcpproxy serve, you are starting a gateway that sits between your MCP client and all upstream servers. Authorization is handled at the gateway level using OAuth 2.1 with per-agent tokens. Each agent authenticates once against MCPProxy. MCPProxy then manages the connections to upstream servers, applying consistent authorization policies across all of them.

This is not a theoretical capability or a roadmap item. It is the core architecture. Every tool call flows through the gateway. Every authorization decision is made in one place. Every access event is logged in a single audit trail. The gateway does not just pass traffic through — it owns the security boundary.

The per-agent token model means that different agents can have different permission sets. A research agent might have read-only access to database tools but no access to filesystem tools. A deployment agent might have access to CI/CD tools but not to communication tools. These permissions are defined once in MCPProxy’s configuration and enforced uniformly, regardless of what the upstream servers themselves allow.

Discussion #2402: Tool Integrity

Discussion #2402 addresses a problem that the MCPwned demonstrations at RSAC made viscerally real: tool definitions can change. An MCP server declares a set of tools when it connects. The agent sees those tool names, descriptions, and parameter schemas and builds its understanding of what each tool does. But nothing in the current spec prevents the server from changing those definitions mid-session.

This is the rug pull attack. A server initially declares a read_file tool with an honest description. The agent trusts it. Later in the session, the server silently changes the tool’s behavior — or, more subtly, changes its description to include prompt injection payloads that redirect the agent’s behavior. The agent has no mechanism to detect this change because the spec provides no concept of tool integrity.

The discussion proposes schema pinning, hash verification of tool definitions, and signature-based trust anchors. The idea is straightforward: when a tool is first declared, its schema is recorded and hashed. Subsequent calls verify that the schema has not changed. If it has, the tool is flagged, suspended, or requires explicit re-approval.

What MCPProxy Ships Today

MCPProxy’s quarantine system is a working implementation of this exact pattern, and it goes further than what the discussion currently proposes. When a new upstream server is added via mcpproxy upstream add, every tool from that server enters quarantine automatically. Quarantined tools exist in MCPProxy’s registry, but they are invisible to the agent. The agent cannot call them, cannot see their descriptions, and cannot even discover that they exist.

An administrator must explicitly approve each server’s tools with mcpproxy upstream approve before the agent gains access. This is not a warning or a soft gate. It is a hard deny-by-default boundary. The tool does not exist in the agent’s world until a human says it does.

But quarantine is not just an onboarding mechanism. MCPProxy continuously monitors tool definitions from upstream servers. If a server changes a tool’s schema, description, or parameters after approval, MCPProxy detects the change and can re-quarantine the affected tools. This is the runtime integrity check that discussion #2402 is proposing — except MCPProxy does not just detect the change. It enforces a response.

The architectural difference is important. Schema pinning alone tells you that something changed. Quarantine tells you that something changed and prevents the agent from interacting with the modified tool until a human reviews the change. In a world where rug pull attacks are demonstrated at major security conferences, detection without enforcement is insufficient.

Discussion #2413: Service Registry

As the MCP ecosystem grows, a new problem emerges: discovery. When an organization has hundreds of MCP servers offering thousands of tools, how does an agent find the right tool for a given task? The current spec assumes that the client knows exactly which servers to connect to and which tools to call. This assumption does not survive contact with production.

Discussion #2413 proposes a service registry — a centralized index of available tools, their capabilities, their metadata, and their availability. The registry would allow agents to search for tools by description, filter by capability, and discover new tools without manual configuration. It is essentially a catalog that makes the MCP ecosystem navigable.

The discussion draws on familiar patterns from service mesh architectures. Just as Consul or Eureka provide service discovery for microservices, an MCP service registry would provide tool discovery for agents. The registry needs to be queryable, it needs to stay current as servers come and go, and it needs to support some form of relevance ranking so agents find the best tool rather than just any tool.

What MCPProxy Ships Today

MCPProxy’s BM25 index is a local service registry that has been solving this problem since the project’s first version. Every tool from every connected upstream server is indexed using BM25 term-frequency scoring. When an agent needs a tool, MCPProxy scores all available tools against the agent’s query and returns the most relevant matches.

This is not vector search. It does not require embeddings, a GPU, or an external API. BM25 operates on the structured, keyword-rich text of tool names and descriptions — exactly the domain where term-frequency scoring excels. It runs in microseconds on commodity hardware. It requires zero configuration. It produces accurate results because tool descriptions are written to be precise, not conversational.

The BM25 index updates dynamically as servers connect and disconnect. When a new server is added and its tools are approved out of quarantine, those tools immediately become discoverable. When a server disconnects, its tools are removed from the index. The registry is always current, always local, and always fast.

The practical impact is significant. An agent connected to MCPProxy does not need to know which server provides a database query tool, which server provides a file management tool, and which server provides a deployment tool. It describes what it needs, and MCPProxy finds the best match across all connected servers. This transforms MCP from a point-to-point protocol into a service mesh for AI tools.

Discussion #698: User-Scoped Tool Discovery

Discussion #698 tackles the permission dimension of discovery. Even if a registry exists, not every user should see every tool. A junior developer should not discover tools that deploy to production. An external contractor should not discover tools that access internal databases. The discussion proposes that tool visibility should be scoped to the user’s identity and permissions — what you can discover should depend on who you are.

This is a natural extension of both the gateway authorization model from #804 and the registry model from #2413. If the gateway controls access and the registry controls discovery, then user-scoped discovery means the registry respects the gateway’s access policies. You should only discover tools you are allowed to use.

What MCPProxy Ships Today

MCPProxy’s per-agent token system inherently provides user-scoped tool discovery. When an agent authenticates with MCPProxy, it receives a token that maps to a specific set of permissions. The BM25 search only returns results from the set of tools that agent is authorized to access. An agent with restricted permissions will never see tools it cannot call — not because those tools are hidden by a filter, but because they are not included in that agent’s searchable index.

This is scoping at the architecture level rather than scoping at the presentation level. There is an important difference. A presentation-level filter shows you a filtered view of a complete registry. If the filter has a bug, restricted tools become visible. An architecture-level scope means the restricted tools are never in the search space to begin with. The agent’s BM25 index is constructed from its authorized tool set. There is nothing to leak because the unauthorized tools were never indexed for that agent.

The Pattern: Spec Describes, MCPProxy Implements

These four discussions are not isolated proposals. They form a coherent architecture:

- A gateway that centralizes authorization and acts as the security boundary (#804)

- Integrity verification that detects and responds to tool definition changes (#2402)

- A service registry that makes tools discoverable across servers (#2413)

- User-scoped discovery that ensures visibility matches permissions (#698)

Read as a single design document, this is MCPProxy’s architecture. Not because MCPProxy influenced these discussions, but because the problems are real and the constraints lead to the same set of solutions. If you need centralized auth, you need a gateway. If you need integrity, you need quarantine. If you need discovery, you need an index. If you need scoping, you need per-identity views of that index.

The convergence is not coincidental. It is inevitable. These are the same conclusions that anyone building production MCP deployments reaches after encountering the same failure modes: fragmented authorization across dozens of servers, rug pull attacks exploiting mutable tool definitions, agents unable to find the tools they need, and users discovering tools they should never have access to.

What This Means for MCPProxy’s Roadmap

The specification discussions validate MCPProxy’s architectural choices, but they also create an opportunity. As these patterns are formalized in the spec, MCPProxy can serve as a reference implementation — a working system that demonstrates how the proposed patterns operate in practice.

This means several things for the project’s direction:

Spec compliance. As the discussions mature into formal spec additions, MCPProxy will align its implementation with the exact wire-format and API conventions the spec defines. The architecture will not change, but the interfaces will match what the spec mandates. Interoperability matters more than being first.

Contribution. MCPProxy’s experience shipping these patterns in production is directly relevant to the spec discussions. Edge cases that emerge from real deployments — how quarantine interacts with long-running sessions, how BM25 ranking handles tool name collisions, how per-agent tokens behave during token refresh — are the kind of implementation feedback that specification authors need.

Validation. Every MCPProxy deployment is a test of the architecture these discussions propose. When the spec formalizes gateway-based authorization, there will already be hundreds of MCPProxy instances running that pattern. When tool integrity becomes a spec requirement, MCPProxy’s quarantine system will have months of production data on how schema changes actually manifest in the wild.

Building Toward the Same Thing

The MCP specification is evolving quickly. Six months ago, it was a protocol for connecting AI agents to tools. Today, the community is actively designing the security, governance, and discovery layers that production deployments require. The discussions referenced here are not aspirational wish lists. They are detailed technical proposals with growing consensus from spec authors and major implementors.

MCPProxy occupies a specific position in this evolution. It is not a spec-compliant gateway because the spec has not yet defined what a gateway should be. It is a production system that solved the problems the spec is now addressing, using approaches that the spec community is now converging on independently. That convergence is the strongest signal that the architecture is right.

If you are building MCP deployments and waiting for the spec to define security, discovery, and authorization patterns, you do not need to wait. MCPProxy ships them today. When the spec catches up, you will already be running it.

Get started:

# Install MCPProxy

go install github.com/smart-mcp-proxy/mcpproxy-go@latest

# Start the gateway

mcpproxy serve

# Add an upstream MCP server (enters quarantine by default)

mcpproxy upstream add --name my-server --url http://localhost:3000

# Review and approve tools

mcpproxy upstream list

mcpproxy upstream approve --name my-serverThe spec is converging. The implementation is already here.