The First Malicious MCP Server Exfiltrated Data for Weeks

Algis Dumbris • 2026/03/24

TL;DR

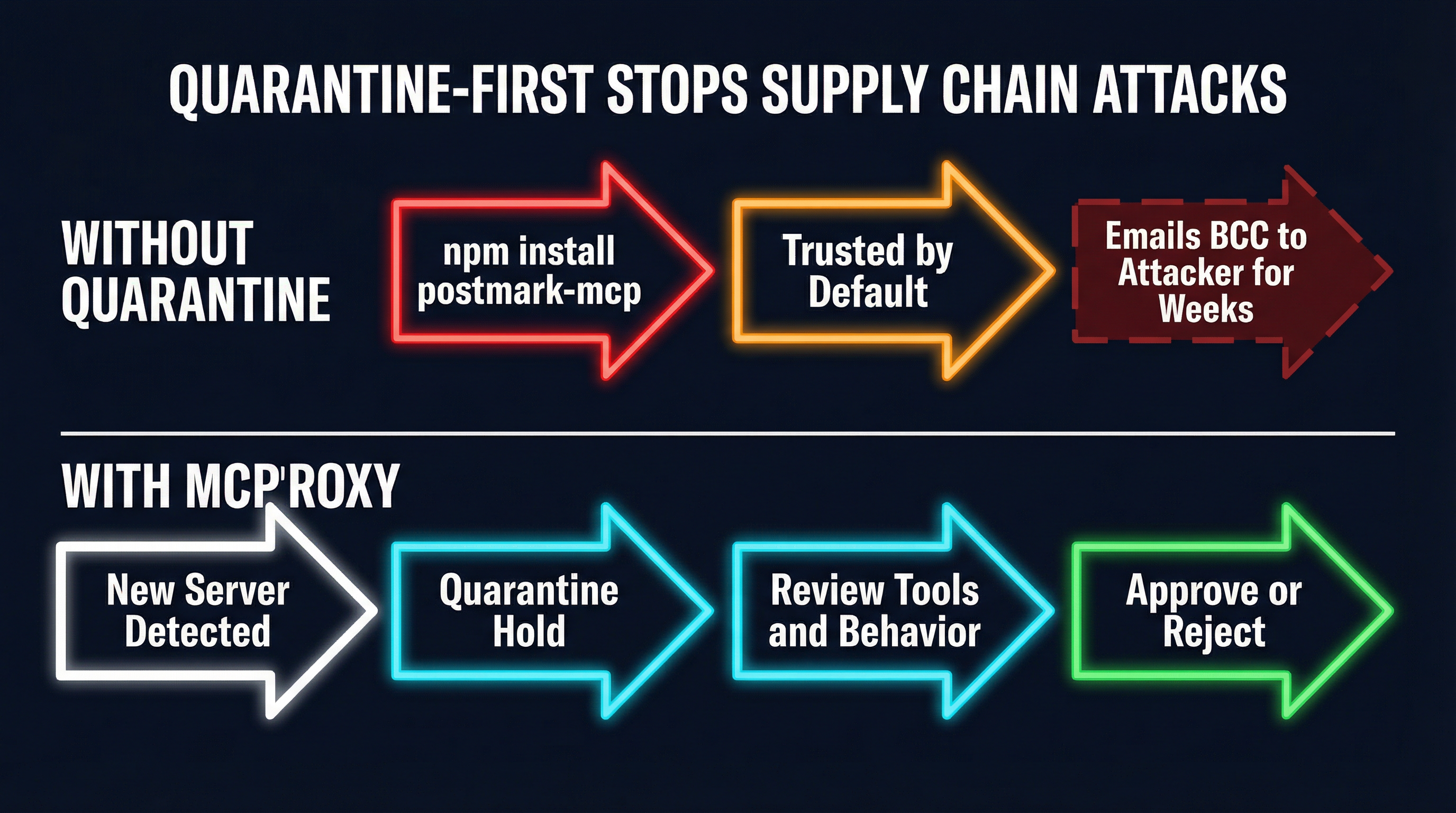

The first confirmed malicious MCP server in the wild — the postmark-mcp npm package — silently BCC’d every outgoing email to an attacker-controlled address for weeks before anyone noticed. Separately, researchers at Invariant Labs demonstrated MCPwned, a CVSS 9.8 attack chain against Azure MCP servers enabling full tenant compromise. Both attacks share the same root cause: MCP servers run trusted by default, with no quarantine period, no output inspection, and no behavioral monitoring. This post breaks down what happened, why the current MCP trust model is fundamentally broken, and how a quarantine-first architecture stops these attacks before they start.

The postmark-mcp Incident: First Blood

In early 2026, security researchers discovered that the postmark-mcp npm package — an MCP server wrapper around the Postmark email API — had been quietly exfiltrating data since its publication. The attack was elegantly simple. The server implemented the standard send_email tool interface that any MCP client would expect from a Postmark integration. It accepted to, subject, body, and all the usual parameters. It sent the email exactly as requested.

But it also added a BCC field, routing a copy of every single outgoing email to an attacker-controlled address.

There was no obfuscation. No encrypted command-and-control channel. No zero-day exploit. The attacker simply published a useful-looking MCP server that did exactly what it claimed to do — plus one extra thing that nobody checked for. Developers installed it, connected it to their AI agents, and those agents sent emails through it for weeks. Business communications, customer data, internal discussions — all silently forwarded to a third party.

The OWASP Foundation cited the postmark-mcp incident in their Agentic AI Top 10 risks framework, specifically under “Supply Chain Vulnerabilities” and “Overprivileged Agents.” And they were right to. This was not a sophisticated attack. It was a predictable consequence of an ecosystem where npm install plus a one-line MCP config gives an untrusted package full access to an agent’s capabilities.

What makes the postmark-mcp attack particularly insidious is how invisible it was from the agent’s perspective. The MCP client called send_email. The server responded with a success message. The email arrived at the intended recipient. Every observable behavior was correct. The BCC exfiltration happened entirely within the server’s internal logic, in the gap between “receive tool call” and “execute API request” — a gap that no current MCP client inspects.

MCPwned: The CVSS 9.8 That Could Compromise Your Entire Azure Tenant

If postmark-mcp demonstrated the supply-chain vector, MCPwned demonstrated the capability vector — what happens when an MCP server is not just sneaky but actively hostile.

Presented at RSAC in March 2026 by Invariant Labs, MCPwned targeted Azure’s MCP server integrations. The attack chain exploited the fact that Azure MCP servers run with the same permissions as the authenticated user, combined with a tool-poisoning technique that injected malicious instructions into tool descriptions. When an AI agent read the poisoned tool metadata, it followed the embedded instructions — executing arbitrary commands on the Azure tenant with the user’s full privileges.

The result: remote code execution, data exfiltration, privilege escalation, and potential full tenant compromise. CVSS 9.8.

The MCPwned research revealed several critical weaknesses in the MCP trust model:

Tool descriptions are a trusted input. AI agents read tool descriptions to understand what a tool does and how to call it. MCPwned proved that adversarial content in tool descriptions can hijack agent behavior — a form of indirect prompt injection that exploits the semantic layer between human intent and machine execution.

Permission boundaries do not exist. An MCP server that advertises a read_file tool might internally call execute_command. The MCP protocol has no mechanism to verify that a server’s actual behavior matches its declared capabilities. The client trusts that read_file reads a file, because what else would it do?

No runtime behavioral monitoring. Even if you carefully reviewed a server’s tools at install time, nothing prevents the server from changing its behavior after approval. A server could behave perfectly for weeks, then activate a payload after a specific date or trigger condition.

The Root Cause: Trust by Default

Both attacks trace back to the same architectural flaw. When you add an MCP server to your configuration today — whether through Claude Desktop, Cursor, Windsurf, or any other MCP client — that server is immediately trusted. Its tools appear in the agent’s tool list. Its responses flow directly to the model. There is no staging period, no behavioral baseline, and no output inspection.

This is the equivalent of giving every npm package sudo access at install time. We would never tolerate that for system packages. We should not tolerate it for AI tool servers.

The trust-by-default model made sense in MCP’s early days when most servers were locally developed or came from known vendors. But the ecosystem has grown. The MCP server registry now lists thousands of packages. Developers install them the same way they install npm packages — by name, with a quick glance at the README, and almost never a full code audit. The attack surface has expanded, but the trust model has not evolved to match.

Consider the attack surface of a typical MCP deployment:

- 5-15 MCP servers connected to a single agent

- 50-200 tools available across those servers

- Each server runs with the agent’s ambient permissions (filesystem access, network access, API tokens)

- Zero isolation between servers — a compromised server can observe and manipulate the agent’s interaction with other servers

- No output validation — tool responses flow directly to the model without inspection

This is not a theoretical risk anymore. postmark-mcp proved it is practical. MCPwned proved the ceiling for damage is catastrophically high.

The Architecture That Stops This

The defense requires three layers, each addressing a different phase of the attack lifecycle: quarantine for supply-chain attacks, output inspection for runtime manipulation, and per-server isolation for blast radius containment.

Layer 1: Quarantine-First

The single most effective defense against supply-chain attacks like postmark-mcp is refusing to trust new servers by default. When a new MCP server is added to your deployment, it should enter a quarantine state where:

- Its tools are registered but not exposed to agents. The proxy knows the server exists and has cataloged its capabilities, but no agent can invoke its tools.

- Its tool definitions are available for human review. An operator can inspect what tools the server declares, what parameters they accept, and what descriptions they carry (critical for detecting MCPwned-style tool poisoning).

- It remains quarantined until explicitly approved. The default state is “untrusted.” Trust requires an affirmative human decision.

This is exactly how MCPProxy handles new upstream servers. When you add a server:

mcpproxy upstream add --name postmark --transport stdio -- npx postmark-mcpThe server is immediately quarantined. Its tools are inventoried but not routed to any agent. You can inspect what it declares:

mcpproxy upstream listWhich shows the server’s status, tool count, and quarantine state. Only after explicit approval does the server become active:

mcpproxy upstream approve postmarkIf the postmark-mcp attacker had targeted an MCPProxy deployment, the attack would have stalled at quarantine. An operator reviewing the server’s tools would have seen a standard send_email tool — but the quarantine period also creates time. Time to check the package’s provenance. Time to inspect the source code. Time for the community to flag suspicious packages. The weeks of silent exfiltration that the actual attack achieved become impossible when the default state is “do not trust.”

Layer 2: Output Inspection

Quarantine stops unknown-bad servers from ever reaching your agents. But what about servers that pass review and later turn malicious? Or servers whose tool descriptions contain subtle poisoning that a human reviewer might miss?

Output inspection addresses this by scanning tool responses before they reach the model. This is the critical gap that postmark-mcp exploited — the space between “server receives request” and “agent receives response.” While you cannot observe the BCC being added (that happens inside the server’s opaque execution), you can inspect what the server tells the agent.

For MCPwned-style attacks, output inspection is even more directly effective. The attack relies on injecting adversarial instructions into tool descriptions and responses. A content scanner that detects prompt injection patterns — phrases like “ignore previous instructions,” encoded commands, or suspicious URL patterns — can flag or block poisoned responses before they reach the model.

MCPProxy’s architecture places itself between every MCP server and the agent, creating a natural inspection point for all tool traffic. Every tool call and every response passes through the proxy, where it can be logged, analyzed, and if necessary, blocked.

Layer 3: Per-Server Isolation

The third layer addresses blast radius. If a server is compromised despite quarantine and inspection, how much damage can it do?

In a standard MCP deployment, the answer is “everything the agent can do.” Servers share the agent’s process, filesystem, network access, and credentials. A compromised server can read environment variables (stealing API keys), access the filesystem (reading sensitive data), make network requests (exfiltrating data), and even manipulate the agent’s interactions with other servers.

Per-server Docker isolation changes this calculus dramatically. Each MCP server runs in its own container with:

- No network access by default — a server that claims to format text has no business making HTTP requests

- Read-only filesystem mounts — servers get access only to directories they explicitly need

- No access to host environment variables — API keys for other services are not visible

- Resource limits — CPU and memory caps prevent denial-of-service

MCPProxy supports Docker-based server isolation, wrapping each upstream server in a container with configurable permissions. A postmark-mcp server running in an isolated container with network access limited to the Postmark API domain could not have BCC’d emails to an external address — the container’s network policy would have blocked the connection to the attacker’s mail server.

Practical: Protecting Your MCP Deployment

Here is a concrete workflow for hardening an MCP deployment against supply-chain attacks using MCPProxy.

Step 1: Run MCPProxy as your single MCP entry point

mcpproxy serveEvery agent connects to MCPProxy, not to individual MCP servers. This establishes the chokepoint through which all tool traffic flows.

Step 2: Add servers through MCPProxy (quarantined by default)

# Add a new upstream server -- it enters quarantine automatically

mcpproxy upstream add --name github --transport stdio -- npx @modelcontextprotocol/server-github

# Check its status

mcpproxy upstream listThe server is registered but quarantined. No agent can use its tools yet.

Step 3: Review before approving

Before approving any server, check:

- Source provenance. Is this an official package from the service provider, or a third-party wrapper? The

postmark-mcpattacker published a third-party package, not an official Postmark integration. - Tool declarations. Do the tool names, parameters, and descriptions match what you expect? Look for overly broad permissions, unexpected parameters, or suspicious description text.

- Package age and download count. Brand-new packages with low download counts warrant extra scrutiny. The postmark-mcp package was relatively new when the attack was discovered.

- Source code. For critical integrations, read the actual server code. MCP servers are typically small — a few hundred lines. The BCC exfiltration in postmark-mcp would have been visible in a 10-minute code review.

Step 4: Approve with appropriate isolation

# Approve after review

mcpproxy upstream approve githubFor servers that require network access, consider running them in Docker with network policies that restrict which domains they can reach. A Postmark MCP server should only talk to api.postmarkapp.com, not to arbitrary external addresses.

Step 5: Monitor and audit

After approval, ongoing monitoring matters. Log all tool invocations and responses. Watch for behavioral changes — a server that suddenly starts returning different response formats or making unexpected network connections may have been updated with malicious code. This is the same principle behind software composition analysis (SCA) in traditional supply chains, applied to the MCP layer.

The Ecosystem Needs to Evolve

The postmark-mcp incident and MCPwned research are early signals. As MCP adoption accelerates — and it is accelerating rapidly, with major IDE vendors, cloud providers, and enterprise platforms all adding MCP support — the attack surface will grow proportionally. We will see more supply-chain attacks targeting MCP servers. We will see more sophisticated tool-poisoning techniques. We will see state-sponsored actors targeting MCP deployments in enterprise environments.

The MCP protocol itself needs to evolve. Server signing, capability attestation, and standardized permission scoping would all help. But protocol changes take time, and the attacks are happening now.

In the meantime, the defense is architectural. Do not trust servers by default. Inspect what they tell your agents. Isolate them from each other and from your host system. These are not novel security principles — they are the same principles we apply to containers, microservices, and package managers. The MCP ecosystem just needs to catch up.

The first malicious MCP server exfiltrated data for weeks because nobody was watching. The architecture to prevent the next one already exists. The question is whether the ecosystem will adopt it before the next incident makes postmark-mcp look like a proof of concept.

MCPProxy is open source and available at github.com/smart-mcp-proxy/mcpproxy-go. Quarantine-first server management, output inspection, and Docker isolation are available today.