The Three Gates of AI Infrastructure: Why MCP Needs Its Own Gateway Layer

Algis Dumbris • 2026/03/22

TL;DR

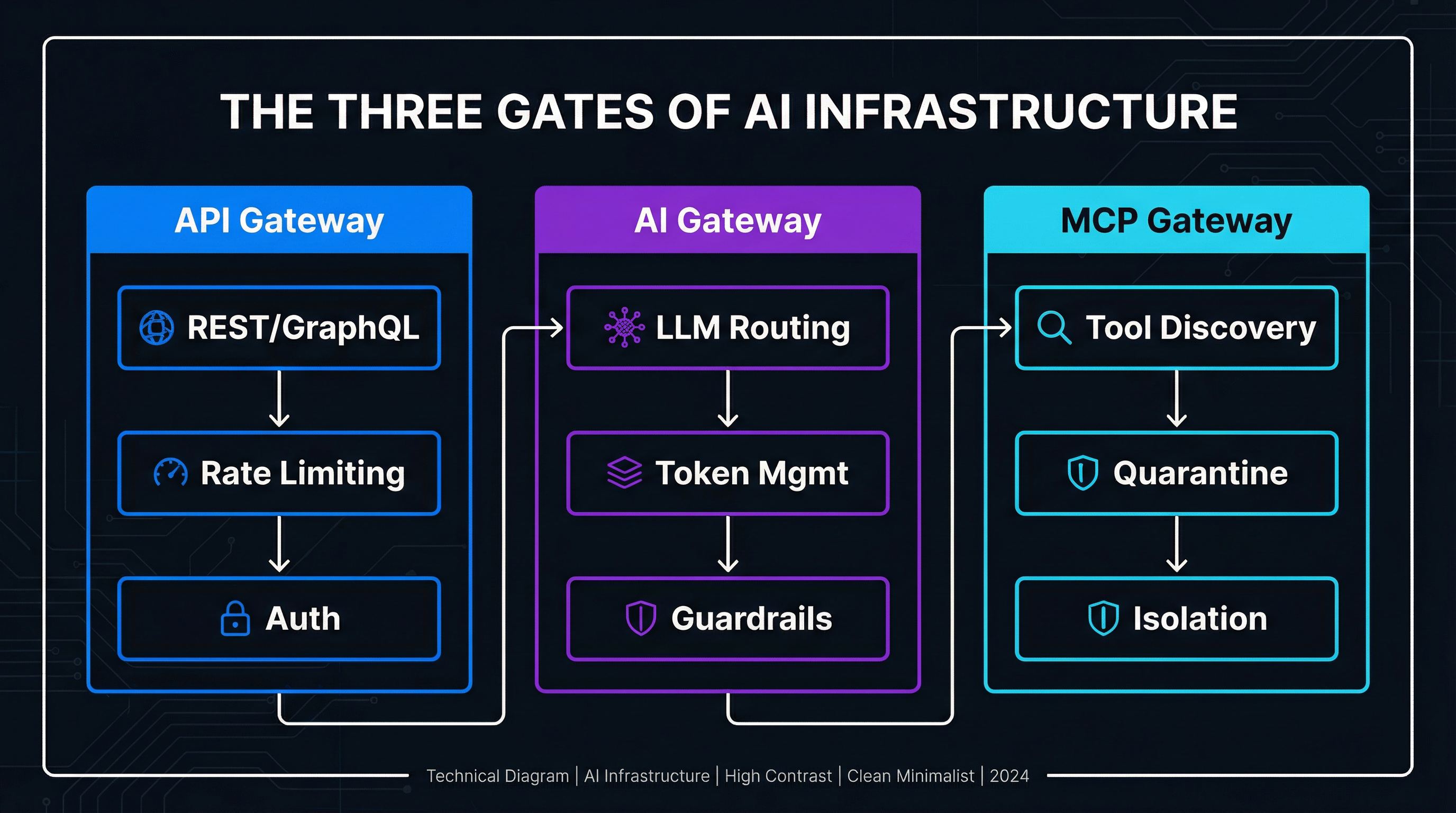

The infrastructure world is splitting into three distinct gateway layers: API gateways for REST/GraphQL traffic, AI gateways for LLM calls and token management, and MCP gateways for tool discovery and agent-tool interaction. In a single week in March 2026, five companies — Aurascape, Traefik, Proofpoint, Qualys, and Strata — announced MCP-specific products. Traefik’s “Triple Gate” architecture makes the split explicit. This post explains why each gate exists, why MCP cannot piggyback on the other two, and where MCPProxy fits as a purpose-built MCP gateway.

The Three Gates

For fifteen years, API gateways have been the single choke point between clients and backend services. Kong, Traefik, NGINX, AWS API Gateway — the pattern is well understood. Every HTTP request passes through a reverse proxy that handles authentication, rate limiting, routing, and observability. One gate, one set of concerns.

Then LLMs arrived, and the API gateway pattern stretched but did not break. Services like Portkey, LiteLLM, and Helicone emerged as “AI gateways” — proxies that sit between your application and LLM providers, handling model routing, token budgets, prompt caching, and guardrails. These look superficially similar to API gateways, but the concerns are different enough that bolting them onto Kong or Traefik never worked cleanly. Token counting is not rate limiting. Prompt injection detection is not input validation. Model failover is not load balancing. The AI gateway became its own category.

Now MCP is forcing the same split again.

An MCP gateway sits between AI agents and the tools they invoke. It is not routing HTTP requests to microservices. It is not routing prompts to language models. It is routing tool calls to MCP servers — and the semantics of that routing are fundamentally different from both API and AI traffic.

Here is what each gate actually handles:

Gate 1: API Gateway — Manages traditional client-server HTTP traffic. Concerns: authentication, authorization, rate limiting, request/response transformation, service discovery, load balancing, TLS termination. The request is stateless, the protocol is HTTP, and the payload is structured data (JSON, protobuf, GraphQL). Kong, Traefik, NGINX, and cloud-native offerings like AWS API Gateway own this space. Mature, well-understood, battle-tested.

Gate 2: AI Gateway — Manages application-to-LLM traffic. Concerns: model routing and failover, token counting and budget enforcement, prompt caching, guardrails and content filtering, cost tracking, latency optimization. The request is a prompt or completion, the protocol is typically HTTP but the semantics are generative AI specific. Portkey, LiteLLM, Helicone, and now the AI-gateway features in Traefik and Cloudflare own this space. Growing fast, consolidating.

Gate 3: MCP Gateway — Manages agent-to-tool traffic. Concerns: tool discovery and selection, tool schema validation, execution isolation, quarantine and approval workflows, session management, bidirectional communication. The request is a tool call, the protocol is MCP (JSON-RPC over stdio/SSE/HTTP), and the payload includes both the invocation and the tool’s schema. This gate is the newest and the least understood. MCPProxy, Traefik’s MCP support, and the emerging wave of MCP security products are building here.
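To make the distinction concrete, here is a minimal sketch of how the three kinds of traffic differ in payload shape alone. The `classify_gate` function and its heuristics are illustrative assumptions, not part of any product mentioned here. The one grounded detail is that MCP messages are JSON-RPC 2.0 and that tool invocations use the `tools/call` method.

```python
def classify_gate(body: dict) -> str:
    """Guess which gate a decoded JSON payload belongs to (hypothetical heuristic)."""
    # MCP traffic is JSON-RPC 2.0; a tool invocation uses the "tools/call" method.
    if body.get("jsonrpc") == "2.0":
        return "mcp"
    # LLM provider calls typically name a model and carry messages or a prompt.
    if "model" in body and ("messages" in body or "prompt" in body):
        return "ai"
    # Everything else is treated as ordinary API traffic.
    return "api"


tool_call = {
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "list_issues", "arguments": {"repo": "example/repo"}},
}
print(classify_gate(tool_call))
```

A real gateway would decide by endpoint and transport rather than by sniffing bodies; the point is only that the three payloads look nothing alike.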

Why MCP Cannot Reuse Existing Gateways

The instinct is reasonable: “We already have gateways. Just add MCP support to Kong.” But the gap between API/AI gateways and what MCP needs is not a feature gap — it is an architectural mismatch. Four specific properties of MCP traffic make existing gateways a poor fit.

Stateful Sessions

API gateways are built around stateless request-response cycles. Each HTTP request is independent; the gateway routes it, applies policies, and forgets about it. AI gateways extend this slightly with conversation context, but the gateway itself typically does not maintain session state — it passes a conversation ID to the model provider.

MCP sessions are fundamentally stateful. When an agent connects to an MCP server, it establishes a session that persists across multiple tool calls. The server maintains state about available tools, active resources, and in-progress operations. The gateway cannot simply route individual requests — it must track the session, ensure subsequent calls reach the same server instance, and handle session lifecycle (creation, suspension, resumption, termination). This is closer to WebSocket session management than HTTP proxying, and most API gateways handle it poorly or not at all.
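The session-affinity requirement can be sketched in a few lines. This is an illustration under stated assumptions, not how any particular gateway implements it: `SessionRouter`, the round-robin placement, and the backend names are all hypothetical. In the Streamable HTTP transport the session identifier travels in the `Mcp-Session-Id` header; here it is just an opaque string.

```python
import uuid


class SessionRouter:
    """Pins each MCP session to one backend for its whole lifetime (sketch)."""

    def __init__(self, backends):
        if not backends:
            raise ValueError("at least one backend is required")
        self._backends = list(backends)
        self._sessions = {}  # session id -> backend
        self._next = 0

    def open_session(self) -> str:
        # Place new sessions round-robin, then remember the choice.
        backend = self._backends[self._next % len(self._backends)]
        self._next += 1
        session_id = uuid.uuid4().hex
        self._sessions[session_id] = backend
        return session_id

    def route(self, session_id: str) -> str:
        # Every later call in the session must reach the same backend.
        try:
            return self._sessions[session_id]
        except KeyError:
            raise LookupError("unknown or terminated session") from None

    def close_session(self, session_id: str) -> None:
        self._sessions.pop(session_id, None)
```

A stateless load balancer has no equivalent of the `_sessions` table, which is why it cannot guarantee that the second tool call lands on the server that handled the first.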

Bidirectional Communication

API gateways route client-initiated traffic: the client sends a request, and the server sends a response. AI gateways do the same, sometimes with streaming (SSE) for token-by-token output.

MCP is bidirectional by design. The server can send notifications to the client — resource updates, progress events, schema changes. The MCP specification supports server-initiated messages that have no analogue in REST or typical LLM API patterns. A gateway that only understands request-response cannot correctly handle a server pushing a notifications/resources/updated event back to the agent. You need something that understands the MCP protocol at a semantic level, not just at the HTTP transport level.
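A small sketch shows why request-response bookkeeping is not enough. In JSON-RPC 2.0, a message with a `method` but no `id` is a notification, and nothing pairs it with an earlier request. The `Relay` class below is a hypothetical illustration of gateway-side handling, not MCPProxy's code.

```python
def message_kind(msg: dict) -> str:
    """Classify a JSON-RPC 2.0 message by its fields."""
    if "method" in msg:
        return "request" if "id" in msg else "notification"
    if "id" in msg and ("result" in msg or "error" in msg):
        return "response"
    return "invalid"


class Relay:
    """Tracks agent requests so server responses can be matched (sketch)."""

    def __init__(self):
        self._pending = set()

    def from_agent(self, msg: dict) -> str:
        if message_kind(msg) == "request":
            self._pending.add(msg["id"])
        return "forward_to_server"

    def from_server(self, msg: dict) -> str:
        kind = message_kind(msg)
        if kind == "response":
            # A response must answer a request the agent actually sent.
            if msg["id"] not in self._pending:
                return "drop"
            self._pending.discard(msg["id"])
            return "forward_to_agent"
        if kind in ("notification", "request"):
            # Server-initiated traffic has no pending entry to match against.
            return "forward_to_agent"
        return "drop"
```

A proxy that only knows the first branch of `from_server` would discard a `notifications/resources/updated` message as unsolicited, because no pending request corresponds to it.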

Tool Schema Semantics

API gateways validate payloads against OpenAPI schemas. AI gateways validate prompts against content policies. Both operate on the content of individual messages.

MCP gateways must understand tool schemas — the JSON Schema definitions that describe each tool’s inputs, outputs, and capabilities. This is not just validation; it is discovery. When an agent asks “what tools can help me deploy this service?”, the gateway must search across all connected MCP servers, match the query against tool names, descriptions, and parameter schemas, and return a ranked list. This is a search problem, not a routing problem. BM25, semantic search, and hybrid approaches are the right primitives — not URL-pattern matching or header-based routing.
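To show what "search, not routing" means in practice, here is a compact BM25 ranker over tool names and descriptions. It is a textbook sketch, not MCPProxy's index: `ToolIndex`, the tokenizer, the sample tools, and the parameter defaults (`k1=1.2`, `b=0.75`) are all assumptions chosen for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list:
    # Lowercase and split on anything that is not a letter or digit,
    # so "create_pull_request" becomes ["create", "pull", "request"].
    return re.findall(r"[a-z0-9]+", text.lower())


class ToolIndex:
    def __init__(self, tools: dict, k1: float = 1.2, b: float = 0.75):
        # tools maps tool name -> human-readable description.
        self.k1, self.b = k1, b
        self.docs = {n: Counter(tokenize(n + " " + d)) for n, d in tools.items()}
        self.lengths = {n: sum(tf.values()) for n, tf in self.docs.items()}
        self.avg_len = sum(self.lengths.values()) / max(len(self.docs), 1)
        # Document frequency: in how many tools each term appears.
        self.df = Counter(t for tf in self.docs.values() for t in tf)

    def _idf(self, term: str) -> float:
        n, df = len(self.docs), self.df[term]
        return math.log(1 + (n - df + 0.5) / (df + 0.5))

    def search(self, query: str, limit: int = 5) -> list:
        terms = tokenize(query)
        scores = {}
        for name, tf in self.docs.items():
            # Length normalization penalizes long descriptions.
            norm = self.k1 * (1 - self.b + self.b * self.lengths[name] / self.avg_len)
            score = sum(
                self._idf(t) * tf[t] * (self.k1 + 1) / (tf[t] + norm)
                for t in terms if tf[t]
            )
            if score > 0:
                scores[name] = score
        return sorted(scores, key=scores.get, reverse=True)[:limit]


index = ToolIndex({
    "create_pull_request": "Open a pull request on a GitHub repository",
    "list_issues": "List issues in a GitHub repository",
    "read_file": "Read a file from the local filesystem",
})
print(index.search("open a pull request"))
```

BM25 is purely lexical: it rewards rare query terms that appear in a tool's text. That is why the paragraph above lists semantic and hybrid search as complementary options for queries that share no vocabulary with the tool description.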

Quarantine and Trust Boundaries

API gateways enforce coarse-grained access control: this API key can access these endpoints. AI gateways enforce content policies: this prompt does not violate these guardrails.

MCP gateways face a harder problem. Tools are code, and code can be malicious. An MCP server that exposes a write_file tool could overwrite critical system files. A tool that claims to “search the web” could exfiltrate data to an external server. The gateway must implement quarantine — holding newly discovered tools in a restricted state until they are reviewed and approved — and execution isolation — running tool calls in sandboxed environments where they cannot damage the host system or access resources they should not.

This is not a policy you bolt onto an API gateway. It requires a fundamentally different trust model where the default posture is “deny until proven safe” rather than “allow unless explicitly blocked.”
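The "deny until proven safe" posture reduces to a small state machine. The sketch below is a hypothetical illustration, with `ToolRegistry` and its method names invented for the example; it shows only the default-deny rule, not review workflows or sandboxing.

```python
from enum import Enum


class ToolState(Enum):
    QUARANTINED = "quarantined"
    APPROVED = "approved"


class ToolRegistry:
    """Default-deny registry: unknown and unreviewed tools cannot run (sketch)."""

    def __init__(self):
        self._state = {}  # (server, tool) -> ToolState

    def discover(self, server: str, tool: str) -> None:
        # New tools start quarantined; rediscovery never changes trust.
        self._state.setdefault((server, tool), ToolState.QUARANTINED)

    def approve(self, server: str, tool: str) -> None:
        if (server, tool) not in self._state:
            raise KeyError(f"{server}/{tool} has not been discovered")
        self._state[(server, tool)] = ToolState.APPROVED

    def can_invoke(self, server: str, tool: str) -> bool:
        # Anything that is not explicitly approved is denied,
        # including tools the registry has never seen.
        return self._state.get((server, tool)) is ToolState.APPROVED
```

The important line is the last one: the check asks "is this approved?" rather than "is this blocked?", so a missing entry fails closed.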

The March 2026 Validation

If you needed evidence that the market agrees MCP needs its own gate, the third week of March 2026 provided it in concentrated form. In a span of seven days:

Aurascape launched an AI firewall with explicit MCP gateway capabilities, focusing on tool-call inspection and policy enforcement. Their pitch: enterprises deploying MCP servers internally face the same shadow-IT risks they faced with SaaS adoption, and they need visibility into which agents are calling which tools.

Traefik announced their “Triple Gate” architecture, formally recognizing three distinct gateway layers and shipping MCP-specific routing in Traefik Hub. This is significant because Traefik is one of the most widely deployed API gateways (50 billion+ requests/month across their user base). When Traefik says “MCP is a separate gate,” the infrastructure community listens. Their implementation includes MCP server discovery, tool-level routing, and integration with their existing middleware pipeline for auth and observability.

Proofpoint released MCP security tooling focused on prompt injection via tool responses — the scenario where a compromised MCP server returns malicious content in tool results that manipulates the agent’s behavior. This is an attack vector that does not exist in traditional API traffic and barely exists in direct LLM calls. It is unique to the agent-tool interaction layer.

Qualys announced TotalMCP, a scanning and auditing platform for MCP server deployments. Think “vulnerability scanner for MCP” — it crawls your MCP servers, catalogs exposed tools, identifies dangerous permission combinations, and flags tools that request overly broad system access.

Strata shipped MCP-aware identity and access management, extending their enterprise identity fabric to include tool-level authorization. Which agents can call which tools? Which human approvals are required for which tool categories? This is RBAC applied to the MCP layer.

Five companies, one week, all building for the same gate. This is not coincidence. It is the market recognizing a structural gap in the infrastructure stack.

MCPProxy’s Position

MCPProxy has been building for the MCP gate since before the term “Triple Gate” existed. The design choices we made — BM25 tool discovery, quarantine workflows, Docker isolation — were driven by the same architectural observations that are now becoming consensus.

Here is how MCPProxy maps to the MCP gateway requirements:

Tool Discovery via BM25

When an agent connects to MCPProxy, it does not receive a flat list of every tool across every upstream MCP server. Instead, MCPProxy indexes all tool schemas into a BM25 search index and exposes a search_tools capability. The agent describes what it needs in natural language, and MCPProxy returns the best-matching tools in ranked order.

This is the discovery layer that API gateways lack entirely. Kong does not search its route table by what the caller is trying to accomplish. MCPProxy does, because the MCP gate requires it.

```bash
# Adding an upstream MCP server
mcpproxy upstream add --name github --command "npx" --args "@modelcontextprotocol/server-github"

# Tools are automatically indexed and searchable
# Agent queries are matched via BM25 against tool names and descriptions
```

Quarantine by Default

Every newly discovered tool enters MCPProxy in a quarantined state. It is indexed and searchable, but it cannot be invoked until an administrator explicitly approves it. This is the “deny until proven safe” posture that the MCP gate demands.

```bash
# List quarantined tools awaiting approval
mcpproxy upstream list

# Approve specific tools after review
mcpproxy upstream approve --name github --tools "create_pull_request,list_issues"
```

The quarantine model is particularly important for enterprise deployments where MCP servers may be added by different teams. A new MCP server exposing 50 tools should not automatically become available to every agent in the organization. The gateway must mediate that trust boundary.

Docker Isolation

MCPProxy can run upstream MCP servers inside Docker containers, providing OS-level isolation between tool execution and the host system. A tool that attempts to read /etc/passwd sees only the container's copy, and unauthorized network connections can be blocked by the container's network configuration instead of originating from the host.

This is execution isolation at the gateway layer — a concern that has no analogue in API or AI gateways. You do not sandbox HTTP requests. You do not sandbox LLM prompts. But you absolutely must sandbox arbitrary tool execution, because MCP tools are code running with real system access.
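As a rough illustration of what container-level isolation can look like, the helper below assembles a restrictive `docker run` command line for a stdio-based MCP server. The Docker flags are standard ones, but the function, its defaults, and the image name are assumptions; this is not the configuration MCPProxy generates.

```python
def docker_run_argv(image, command=(), memory="256m", allow_network=False):
    """Build a locked-down `docker run` argv for an MCP server (sketch)."""
    argv = [
        "docker", "run",
        "--rm",                                  # discard the container on exit
        "-i",                                    # keep stdin open for stdio transport
        "--read-only",                           # root filesystem is not writable
        "--cap-drop", "ALL",                     # drop all Linux capabilities
        "--security-opt", "no-new-privileges",   # block privilege escalation
        "--pids-limit", "128",                   # cap the number of processes
        "--memory", memory,                      # cap memory usage
    ]
    if not allow_network:
        argv += ["--network", "none"]            # no network interfaces at all
    return argv + [image, *command]


# "example/mcp-server" is a placeholder image name.
print(" ".join(docker_run_argv("example/mcp-server")))
```

Servers that legitimately call external APIs would need `allow_network=True` or a narrower network policy, and some will need a writable scratch directory. The defaults are deliberately strict so that each relaxation is an explicit decision.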

The CLI Workflow

MCPProxy is operated via a single binary with a straightforward command structure:

```bash
# Start the proxy
mcpproxy serve

# Manage upstreams
mcpproxy upstream add --name <name> --command <cmd> --args <args>
mcpproxy upstream list
mcpproxy upstream approve --name <name> --tools <tools>
```

No YAML manifests. No Helm charts. No web dashboard required. The operational model matches how developers actually manage infrastructure tools: from the terminal, with clear commands and predictable behavior.

What Convergence Looks Like

The natural question is whether the three gates stay separate or merge. History suggests a middle path.

API gateways and service meshes partially converged — Istio absorbed some gateway concerns, Traefik added service-mesh features — but they remain recognizably distinct products. The concerns overlap at the edges (mTLS, observability) but diverge at the core (HTTP routing vs. service-to-service traffic management).

AI gateways are following a similar arc. Cloudflare’s AI Gateway is bundled into their CDN product. Traefik is adding AI-specific middleware to their existing proxy. But standalone AI gateways like Portkey and LiteLLM continue to thrive because the model routing and token management concerns are specialized enough to justify dedicated tooling.

MCP gateways will likely follow the same pattern. Traefik’s Triple Gate bundles all three into a single platform — and for organizations already on Traefik, that will be attractive. But the tool discovery, quarantine, and isolation concerns are specialized enough that purpose-built solutions will coexist alongside the bundled offerings.

The analogy I keep coming back to: databases and caches are both “data stores,” but nobody seriously argues you should use PostgreSQL as your cache or Redis as your primary database. The access patterns are different enough that specialized tools win. API gateways, AI gateways, and MCP gateways are all “proxies,” but the traffic patterns are different enough that specialization will persist.

Three factors will determine how much convergence we actually see:

Protocol maturity. MCP is still early. The specification is evolving, transports are shifting (from stdio, to HTTP with SSE, to Streamable HTTP), and the tool ecosystem is growing fast. Purpose-built gateways can iterate on MCP-specific features faster than general-purpose gateways that must maintain backward compatibility across three protocol types.

Enterprise procurement. Large organizations prefer fewer vendors. If Traefik or Kong ships “good enough” MCP gateway features, some enterprises will choose that over adding a new tool to their stack. “Good enough” is doing a lot of work in that sentence, though — quarantine and isolation are not features you want to be “good enough” at.

Security requirements. The Proofpoint and Qualys announcements signal that MCP security will become a compliance requirement, not just a best practice. When auditors start asking “how do you control which tools your AI agents can invoke?”, organizations will need purpose-built answers. General-purpose gateways with MCP bolted on will struggle to provide the audit trails and policy granularity that compliance demands.

Where This Goes

The three-gate model is not a theory. It is an observation of what the market is already building. Traefik named it. Aurascape, Proofpoint, Qualys, and Strata are building for it. MCPProxy has been shipping it.

If you are deploying MCP servers today — whether for internal developer tools, customer-facing AI agents, or autonomous workflows — you need a gateway that understands MCP at the protocol level. Not an API gateway with an MCP plugin. Not an AI gateway that also handles tool calls. A gateway built from the ground up for tool discovery, trust management, and execution safety.

The third gate is open. The question is what you put in front of it.

MCPProxy is open source and available at github.com/smart-mcp-proxy/mcpproxy-go. To get started: go install github.com/smart-mcp-proxy/mcpproxy-go@latest, then mcpproxy serve.